How AI Systems Reinforce Bias Against Women and Minorities

In a world increasingly mediated by algorithms, a silent crisis is unfolding. From hiring platforms to healthcare diagnostics, artificial intelligence (AI) is making pivotal decisions that shape human lives. Yet, beneath the veneer of mathematical objectivity, these systems often perpetuate and amplify deep-seated societal biases, creating a new digital frontier of inequality for women and racial and ethnic minorities. This is not a malfunction; it is often a mirror, reflecting historical and contemporary prejudices baked into the very data and design choices that bring AI to life.

The Myth of Neutrality: Understanding the Roots of Algorithmic Bias

The core misconception fueling this issue is the “myth of neutrality.” The popular imagination often views algorithms as cold, impartial mathematical processes. In reality, AI is a product of human creation, trained on human-generated data, and deployed in human social contexts. Its objectivity is only as pure as the inputs and intentions behind it. Bias in AI typically arises from three interconnected sources:

- Biased Data Sets: AI models learn patterns from historical data. If that data reflects societal inequalities, such as gender pay gaps, racial profiling in policing, or underrepresentation in certain fields, the AI will learn to replicate and codify those patterns. A hiring algorithm trained on a decade of resumes from a male-dominated tech company is likely to learn to prefer male candidates. A facial recognition system trained primarily on lighter-skinned faces will misidentify people with darker skin at higher rates.

- Biased Design and Framing: The questions asked by developers shape the answers the AI provides. If a predictive policing algorithm is designed to “optimize patrols in high-crime areas” using historical arrest data, it will inevitably target minority neighborhoods already subject to over-policing, creating a vicious feedback loop. The bias is in the objective itself.

- Biased Deployment and Interpretation: Even a relatively sound algorithm can cause harm if deployed without understanding its limitations or local context. A healthcare algorithm used to allocate care management resources, famously documented in a 2019 Science study, prioritized white patients over sicker Black patients because it used historical healthcare costs as a proxy for need, ignoring unequal access to care.
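
The feedback loop described in the second item can be made concrete with a toy simulation. The sketch below is illustrative only: the two areas, the patrol counts, the `hot_share` parameter, and the function name `simulate_patrol_feedback` are assumptions introduced here, not a model of any real deployment. Both areas have the same underlying offense rate; only their historical arrest counts differ.

```python
def simulate_patrol_feedback(initial_arrests, offense_rate, rounds,
                             total_patrols=100, hot_share=0.8):
    """Toy model: each round, most patrols go to the area with the most
    recorded arrests, and new recorded arrests scale with patrol presence.

    Returns area 0's share of all recorded arrests after each round.
    """
    arrests = list(initial_arrests)
    shares = []
    for _ in range(rounds):
        # "Optimize" patrols using the historical record, not true offending.
        hot = max(range(len(arrests)), key=lambda i: arrests[i])
        rest = total_patrols * (1 - hot_share) / (len(arrests) - 1)
        patrols = [total_patrols * hot_share if i == hot else rest
                   for i in range(len(arrests))]
        # What gets recorded depends on where officers are sent.
        for i, p in enumerate(patrols):
            arrests[i] += p * offense_rate[i]
        shares.append(arrests[0] / sum(arrests))
    return shares


# Identical true offense rates; area 0 starts with more recorded arrests
# only because it was patrolled more heavily in the past (assumed numbers).
shares = simulate_patrol_feedback([60, 40], [0.1, 0.1], rounds=5)
print([round(s, 3) for s in shares])
```

With these assumed numbers, area 0's share of recorded arrests rises every round, drifting from 60% toward the 80% patrol share, even though both areas offend at the same rate. The data the system learns from is a record of where police looked, not of where crime occurred.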

Concrete Harms: Case Studies in Gendered and Racialized Bias

The theoretical risks manifest in deeply personal and systemic harms across critical domains.

In Employment and Economic Mobility: AI-driven hiring tools have been caught penalizing resumes that contain the word “women’s” (as in “women’s chess club”) or that list degrees from women’s colleges. Video interview analysis software, which claims to assess candidate suitability via tone and facial expressions, has been criticized for enforcing narrow, culturally specific norms of “confidence.” For minorities, the resume screen is often the first algorithmic gatekeeper. Names perceived as Black or Hispanic can trigger lower ranking scores, a digital replication of well-documented in-person discrimination. Furthermore, gig economy platforms use opaque algorithms to assign work, set pay, and manage performance. Studies suggest these systems can disadvantage women and minorities through route allocation, biased customer ratings, and a lack of transparency in dispute resolution.

In Finance and Housing: Algorithmic credit scoring can deepen financial disparities. By incorporating alternative data like shopping habits or social network connections, these models can create “digital redlining.” A person’s zip code—a longstanding proxy for race in the U.S.—or their associations online can unfairly lower their credit access. Mortgage approval algorithms risk perpetuating historical discrimination if trained on data from an era of explicit redlining. For women, especially in economies with financial data gaps, the lack of traditional credit history can lead to unfair assessments by algorithms demanding extensive digital footprints.

In Healthcare: A Matter of Life and Death: Bias here is particularly alarming. Beyond the resource allocation example above, diagnostic algorithms can perform poorly on underrepresented groups. Research on skin cancer detection has found that algorithms are less accurate on darker skin when their training datasets are overwhelmingly composed of light-skinned individuals. Similarly, pulse oximeters, crucial tools in emergencies, have been shown to be less accurate on people with darker skin, a hardware bias with software implications. Women’s health complaints, historically often dismissed as “hysterical” or “emotional,” face a new threat: AI systems trained on medical literature and records skewed toward male physiology may misdiagnose female patients. Heart attack prediction models, for instance, have been less effective for women because the “typical” symptoms were based on male presentation.

In Criminal Justice and Surveillance: This area presents some of the most visceral dangers. Predictive policing algorithms have been shown to target Black and Latino neighborhoods relentlessly, not because crime is inherently higher, but because past biased policing created the data that trains the system. This leads to more patrols, more arrests, and a reinforcement of the biased data. Risk assessment tools used in bail and sentencing, such as the notorious COMPAS algorithm, have been found to falsely flag Black defendants as future criminals at nearly twice the rate of white defendants. Facial recognition technology, aggressively marketed to law enforcement, has consistently demonstrated abysmal accuracy rates for women of color, leading to wrongful arrests and harassment. For example, in 2020, a Black man in Michigan was wrongfully arrested after a faulty facial recognition match. In a 2018 ACLU test, Amazon’s Rekognition software falsely matched 28 members of Congress to mugshot photos, and people of color made up roughly 39% of those false matches despite holding only about 20% of seats.
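
The disparity reported for risk assessment tools is a difference in false positive rates: among people who did not go on to reoffend, how many were still flagged as high risk? A minimal sketch of that calculation follows. The records are synthetic and are not COMPAS data; the group labels, the counts, and the function name `false_positive_rates` are assumptions made for illustration.

```python
from collections import defaultdict


def false_positive_rates(records):
    """records: iterable of (group, flagged_high_risk, reoffended) tuples.

    The false positive rate for a group is the fraction of people who did
    NOT reoffend but were still flagged as high risk.
    """
    flagged = defaultdict(int)
    non_reoffenders = defaultdict(int)
    for group, flagged_high_risk, reoffended in records:
        if not reoffended:
            non_reoffenders[group] += 1
            flagged[group] += int(flagged_high_risk)
    return {g: flagged[g] / non_reoffenders[g] for g in non_reoffenders}


# Synthetic records, NOT real COMPAS data.
records = (
    [("group_a", True, False)] * 4 + [("group_a", False, False)] * 6
    + [("group_b", True, False)] * 2 + [("group_b", False, False)] * 8
    # People who did reoffend do not enter the false positive rate.
    + [("group_a", True, True)] * 5 + [("group_b", True, True)] * 5
)

rates = false_positive_rates(records)
print(rates)
print("ratio:", rates["group_a"] / rates["group_b"])
```

In this toy data, group_a's false positive rate is twice group_b's. A tool can show similar overall accuracy for both groups and still distribute its errors this unevenly, which is why audits need to report error rates per group rather than a single headline figure.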

In Everyday Technology and Representation: Bias is embedded in the tools we use daily. Early voice assistants like Siri and Alexa, designed with default female voices and subservient personas, reinforced stereotypes of women as helpful, obliging secretaries. They famously struggled with commands to find abortion providers or respond to sexual harassment. Computer vision systems powering image search have returned biased results: searching for “professional hairstyle” yielded almost exclusively white women, while “unprofessional hairstyle” showed Black women with natural hair. Automatic photo-tagging has misidentified Black people as “gorillas” or “apes.” These are not mere glitches; they are deeply alienating experiences that exclude minorities from the digital sphere.

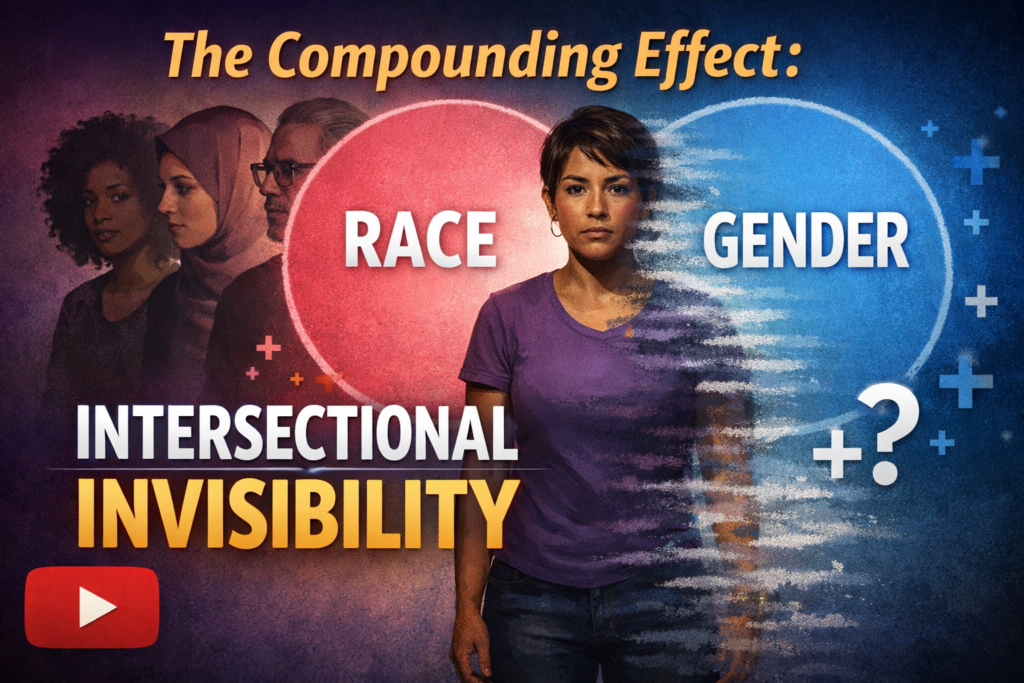

The Compounding Effect: Intersectional Invisibility

The harm is most acute at the intersection of identities. An AI system may be evaluated for bias against “women” and bias against “Black people,” but rarely is it tested specifically for bias against Black women. The unique discrimination they face—in healthcare (higher maternal mortality), in hiring (penalized for natural hair and speech patterns), and in public space (hyper-surveillance)—can be rendered invisible by a myopic, single-axis approach to bias testing. This intersectional gap is one of the most significant blind spots in current AI ethics frameworks.
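
A small example shows how single-axis testing can hide an intersectional failure. The sketch below uses synthetic evaluation results; the subgroup sizes, the accuracy figures, and the helper names `accuracy_by` and `make_records` are assumptions chosen to illustrate the point, not measurements from any real system.

```python
from collections import defaultdict


def accuracy_by(records, keys):
    """Accuracy per subgroup, where a subgroup is defined by the values of
    the attributes named in `keys` (one key = single-axis audit)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for r in records:
        subgroup = tuple(r[k] for k in keys)
        total[subgroup] += 1
        correct[subgroup] += int(r["correct"])
    return {s: correct[s] / total[s] for s in total}


def make_records(gender, race, n, n_correct):
    """Build n synthetic evaluation records, n_correct of them correct."""
    return [{"gender": gender, "race": race, "correct": i < n_correct}
            for i in range(n)]


# Synthetic evaluation results (assumed numbers for illustration only).
records = (
    make_records("woman", "Black", 10, 5)
    + make_records("man", "Black", 90, 81)
    + make_records("woman", "white", 90, 81)
    + make_records("man", "white", 10, 9)
)

print(accuracy_by(records, ["gender"]))          # single-axis: gender
print(accuracy_by(records, ["race"]))            # single-axis: race
print(accuracy_by(records, ["gender", "race"]))  # intersectional
```

In this toy data, the gender audit and the race audit each show a gap of only four percentage points (0.86 versus 0.90), which might pass as acceptable. The intersectional breakdown shows that the system is right only half the time for Black women, while every other subgroup sits at 0.90.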

The Path Forward: From Diagnosis to Cure

Addressing this systemic issue requires a multi-stakeholder, holistic approach that moves beyond technical quick fixes.

1. Diversify the AI Pipeline: The stark homogeneity of the AI workforce, which is overwhelmingly male and predominantly white or East Asian, is a root cause. Diverse teams are more likely to spot flawed assumptions, question biased data, and design for a wider range of experiences. Investment in STEM education for girls and minorities is a long-term necessity.

2. Audit, Audit, Audit: We need rigorous, independent algorithmic auditing. This includes pre-deployment audits of training data for representativeness and proxies for sensitive attributes. Ongoing impact assessments after deployment are crucial to catch emergent biases. Tools like “model cards” and “datasheets for datasets,” which document a model’s intended use, limitations, and performance across demographics, should become standard practice. The EU’s AI Act, adopted in 2024 with a risk-based framework and fundamental rights impact assessments, points toward a regulatory future.

3. Develop Bias-Aware Technical Solutions: Researchers are actively working on technical mitigations: de-biasing data through curation and synthesis, developing fairness-aware algorithms that explicitly constrain models to minimize disparity, and employing adversarial techniques where one part of a model tries to detect and remove bias from another. However, these are tools, not silver bullets, and must be applied with socio-technical understanding.

4. Enforce Robust Regulation and Legal Accountability: Clear legal frameworks are needed. Concepts of algorithmic transparency (right to explanation) and non-discrimination must be updated for the algorithmic age. Agencies like the U.S. Federal Trade Commission have begun acting against biased AI under existing consumer protection laws, signaling that “black box” algorithms are not a shield from liability. Laws must empower individuals to challenge consequential algorithmic decisions.

5. Center Participatory and Community-Led Design: Those most impacted by biased systems must have a seat at the design table. Participatory design, where community organizations co-create or provide essential feedback on AI tools meant for their use, is vital. This ensures that systems address real needs and do not simply automate oppression.
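
Steps 2 and 3 lend themselves to a small worked example. The sketch below audits a synthetic hiring dataset for unequal selection rates, then applies reweighing, a pre-processing technique that weights each (group, outcome) combination by P(group) * P(outcome) / P(group, outcome) so that outcome and group membership become statistically independent in the weighted data. The dataset, group labels, and function names are assumptions introduced for illustration. This is one mitigation among many, not a complete fix.

```python
from collections import Counter


def selection_rates(rows, weights=None):
    """rows: list of (group, label), label 1 = favorable outcome.
    Optional weights map (group, label) -> weight; default weight is 1."""
    totals, positives = Counter(), Counter()
    for group, label in rows:
        w = 1.0 if weights is None else weights[(group, label)]
        totals[group] += w
        positives[group] += w * label
    return {g: positives[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Lowest group selection rate divided by the highest (1.0 = parity)."""
    return min(rates.values()) / max(rates.values())


def reweighing_weights(rows):
    """weight(g, y) = P(g) * P(y) / P(g, y), estimated from counts."""
    n = len(rows)
    group_counts = Counter(g for g, _ in rows)
    label_counts = Counter(y for _, y in rows)
    joint_counts = Counter(rows)
    return {
        (g, y): (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for (g, y) in joint_counts
    }


# Synthetic historical hiring outcomes (assumed numbers).
rows = (
    [("men", 1)] * 60 + [("men", 0)] * 40
    + [("women", 1)] * 30 + [("women", 0)] * 70
)

before = selection_rates(rows)
weights = reweighing_weights(rows)
after = selection_rates(rows, weights)

print("before:", before, "ratio:", disparity_ratio(before))
print("after: ", after, "ratio:", disparity_ratio(after))
```

In the raw data, women are selected at half the rate of men. After reweighing, both groups have the same weighted selection rate, so a model trained with these sample weights no longer sees group membership as predictive of the outcome in the training labels. It does nothing about proxies hidden in other features, which is why the audit and deployment steps remain necessary.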

Conclusion: A Choice of Futures

The bias in our artificial intelligence is a stark reflection of the unresolved biases in our societies. It presents a profound choice. We can allow these tools to hardwire historical injustice into the infrastructure of our future, creating a world where inequality is automated, scaled, and rendered invisible behind a screen of code.

Or, we can seize this moment as a catalyst for a deeper reckoning. By confronting algorithmic bias, we are forced to confront its human sources. The hard work of auditing data, diversifying teams, and enacting smart regulation is not just about fixing machines; it is about rebuilding systems with equity and justice as foundational principles.

The goal cannot be merely “unbiased” AI—a statistical ideal detached from context. The goal must be equitable AI: systems actively designed to identify and counter structural inequality, to uplift the marginalized, and to distribute opportunity and dignity justly. The technology we build today will shape the society of tomorrow. The choice between perpetuating old wounds and healing them is, quite literally, in the code.