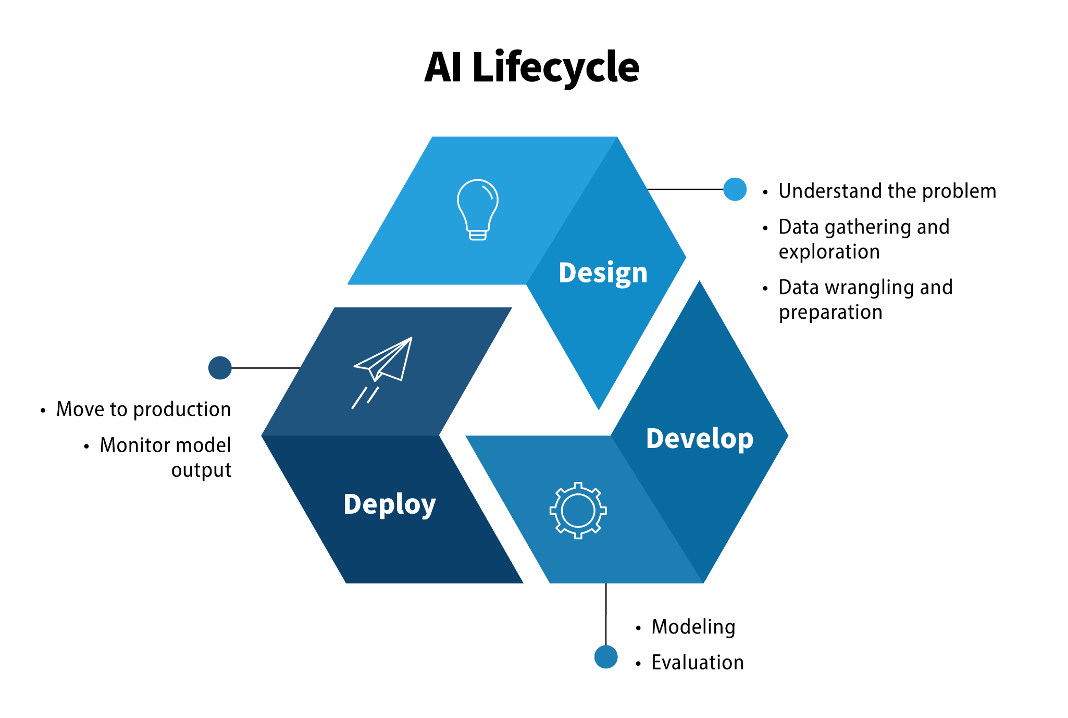

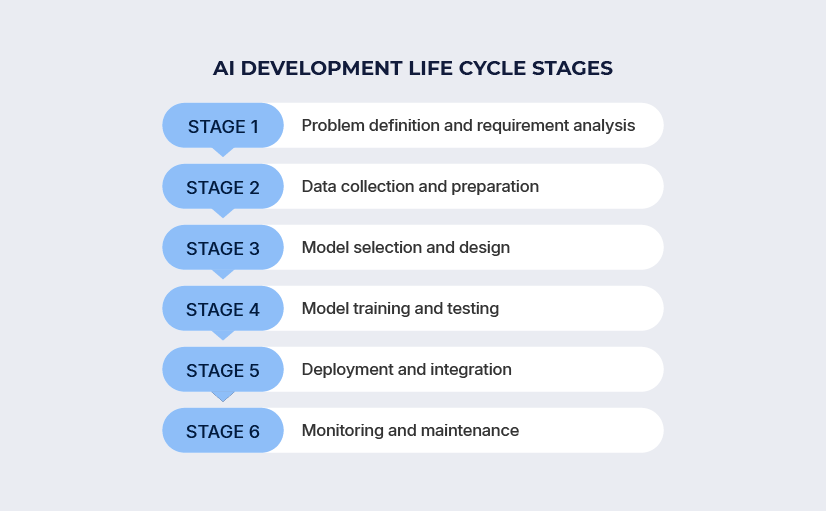

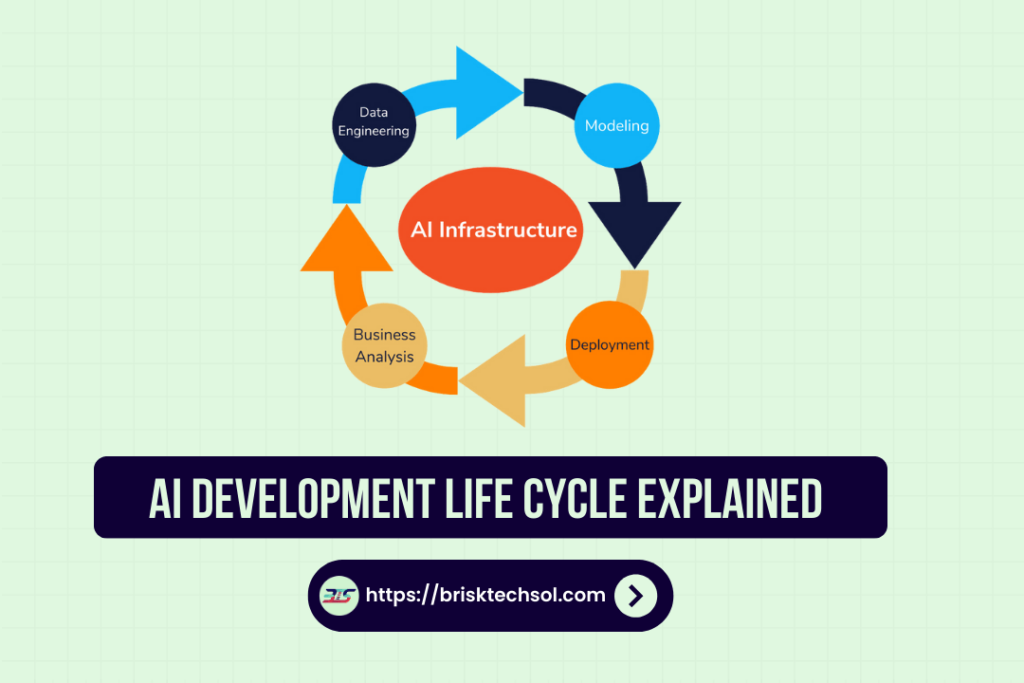

The creation of Artificial Intelligence (AI) is often portrayed as a mystical act of digital genesis, a realm inhabited by mathematicians whispering to silicon. In reality, it is a profoundly human endeavor of engineering, requiring not just genius but a sophisticated and layered toolkit. Building a functional, robust, and valuable AI system is less about a single brilliant algorithm and more about the meticulous orchestration of diverse tools across a complex lifecycle. This article maps the essential tool categories required for contemporary AI development, moving from the raw material of data to the final deployed intelligence, and explores the human expertise that binds them together.

Essential Tools for AI Development Lifecycle

I. The Foundational Quarry: Data Tools

Before any learning can begin, there must be something to learn from. Data is the ore from which intelligence is smelted, and its procurement and refinement are the first and often most labor-intensive stages.

1. Data Acquisition and Generation Tools: AI systems require vast, relevant datasets. Tools here are varied:

- Web Scraping Frameworks (e.g., Scrapy, Beautiful Soup): For programmatically collecting data from public websites, though their use must be tempered with respect for `robots.txt` and legal boundaries.

- Public Dataset Repositories (e.g., Kaggle Datasets, Hugging Face Datasets, UCI Machine Learning Repository): Providing pre-collected, often cleaned datasets for experimentation and benchmarking.

- Synthetic Data Generation Tools (e.g., Synthesized, Mostly AI): Crucial for scenarios where real data is scarce, expensive, or privacy-sensitive (e.g., medical imaging, autonomous driving edge cases). These tools use techniques like Generative Adversarial Networks (GANs) or simulation environments to create realistic, labeled data.

- APIs (Application Programming Interfaces): The conduits for tapping into streams of live or batched data from other services, such as social media platforms, financial markets, or IoT sensor networks.
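
The `robots.txt` check mentioned for web scraping can be sketched with Python's standard library. This is a minimal illustration, not a complete crawling policy: the rules in `EXAMPLE_ROBOTS_TXT`, the `example-bot` user agent, and the `is_allowed` helper are all hypothetical, and a real crawler would download the file from the target site and also honor rate limits and terms of service.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration; a real crawler would fetch
# https://<site>/robots.txt before requesting any other page.
EXAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/articles/1",
                "https://example.com/private/report"):
        print(url, is_allowed(EXAMPLE_ROBOTS_TXT, "example-bot", url))
```

Running the check before every request keeps the scraper's behavior tied to the site's published rules rather than to assumptions baked into the code.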

2. Data Labeling and Annotation Tools: For supervised learning, data must be tagged. A picture is just pixels until objects within it are identified.

- Specialized Software (e.g., Labelbox, Scale AI, Supervisely, CVAT): These platforms provide collaborative interfaces for human annotators to draw bounding boxes, segment pixels, transcribe audio, or classify text. They manage workflow, quality control, and label consistency across large teams.

- Active Learning Integration: Advanced tools use the AI model-in-training itself to identify which data points would be most informative to label next, dramatically improving labeling efficiency.
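
One common way to pick the "most informative" items is uncertainty sampling: send annotators the examples the current model is least sure about. The sketch below assumes the model already produced class probabilities per item; the function names and the item IDs are made up for illustration, and labeling platforms may use different selection strategies.

```python
import math


def entropy(probabilities: list[float]) -> float:
    """Shannon entropy of a predicted class distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probabilities if p > 0)


def select_for_labeling(predictions: dict[str, list[float]], budget: int) -> list[str]:
    """Return the IDs of the `budget` items with the most uncertain predictions."""
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,
    )
    return ranked[:budget]


if __name__ == "__main__":
    # Hypothetical model outputs for three unlabeled images.
    unlabeled = {
        "img_a": [0.98, 0.02],  # confident prediction, low labeling value
        "img_b": [0.55, 0.45],  # near coin flip, high labeling value
        "img_c": [0.70, 0.30],
    }
    print(select_for_labeling(unlabeled, budget=2))
```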

3. Data Storage and Management: The sheer volume of data necessitates robust infrastructure.

- Data Lakes (e.g., AWS S3, Azure Blob Storage, Hadoop HDFS): Store massive amounts of raw, unstructured data (images, logs, video) in their native format.

- Data Warehouses (e.g., Google BigQuery, Snowflake, Amazon Redshift): Store structured, processed data optimized for complex querying and analysis.

- Version Control for Data (e.g., DVC – Data Version Control, LakeFS): As essential as code versioning. These tools track changes to datasets, enabling reproducibility and allowing teams to roll back to specific dataset iterations alongside corresponding code versions.
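
The core idea behind dataset versioning can be illustrated with a content fingerprint: if any file changes, the fingerprint changes, so a commit can record exactly which data it was trained on. This is only a sketch of the concept under that assumption; `dataset_fingerprint` is a hypothetical helper and does not reproduce how DVC or LakeFS are implemented.

```python
import hashlib
from pathlib import Path


def dataset_fingerprint(directory: Path) -> str:
    """Hash every file's relative path and contents into one hex digest."""
    digest = hashlib.sha256()
    # Sort paths so the result does not depend on filesystem listing order.
    for path in sorted(p for p in directory.rglob("*") if p.is_file()):
        digest.update(path.relative_to(directory).as_posix().encode("utf-8"))
        digest.update(b"\0")  # separator between the path and the file contents
        digest.update(path.read_bytes())
    return digest.hexdigest()
```

Storing this digest next to the Git commit hash of the training code gives a simple, checkable link between a model and the exact data that produced it.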

4. Data Cleaning and Preprocessing Tools: Raw data is messy. This stage transforms it into a form digestible by algorithms.

- Pandas & NumPy (Python libraries): The workhorses for data manipulation, filtering, handling missing values, and numerical computation.

- Apache Spark: A distributed computing engine for preprocessing terabytes of data across clusters, far beyond the memory limits of a single machine.

- Trifacta, OpenRefine: GUI-based tools for visually exploring, cleaning, and transforming datasets without extensive programming.
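
Two of the most routine cleaning steps, removing duplicate rows and filling missing values, can be sketched in plain Python. In practice these would usually be done with Pandas (for example `drop_duplicates` and `fillna`); the dependency-free version below, with its hypothetical `clean_records` helper and sample records, only shows the logic.

```python
from statistics import median


def clean_records(records: list[dict], numeric_field: str) -> list[dict]:
    """Drop exact duplicate rows, then fill missing numeric values with the median."""
    seen = set()
    unique = []
    for record in records:
        key = tuple(sorted(record.items()))  # hashable snapshot of the row
        if key not in seen:
            seen.add(key)
            unique.append(dict(record))

    observed = [r[numeric_field] for r in unique if r[numeric_field] is not None]
    fill_value = median(observed)
    for record in unique:
        if record[numeric_field] is None:
            record[numeric_field] = fill_value
    return unique


if __name__ == "__main__":
    raw = [
        {"id": 1, "age": 30},
        {"id": 2, "age": None},  # missing value
        {"id": 3, "age": 50},
        {"id": 1, "age": 30},    # exact duplicate of the first row
    ]
    print(clean_records(raw, "age"))
```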

II. The Laboratory: Algorithm Development & Training Tools

This is the heart of the AI workshop, where models are conceived, built, and educated.

1. Programming Languages & Core Libraries:

- Python: The undisputed lingua franca of AI/ML, prized for its simplicity, vast ecosystem, and powerful scientific libraries.

- R: Remains strong in statistical analysis and specialized research fields.

- Key Libraries: NumPy (numerical computing), SciPy (scientific computing), Pandas (data manipulation), Scikit-learn (the Swiss Army knife for classical ML algorithms like regression, clustering, and SVMs).

2. Deep Learning Frameworks: These are the foundational frameworks that provide the building blocks for neural networks, handling low-level tensor operations and automatic differentiation.

- TensorFlow (Google): Highly scalable, production-oriented, with a steep learning curve but immense power. Keras, now integrated, offers a user-friendly high-level API.

- PyTorch (Meta): Gained dominance in research due to its intuitive, Pythonic “define-by-run” dynamic computation graph, making debugging and experimentation more flexible. It has also made massive strides in production deployment.
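
To make "automatic differentiation" concrete, here is a toy reverse-mode implementation for scalars. It is a teaching sketch, not how TensorFlow or PyTorch are built internally: the `Value` class is hypothetical, supports only addition and multiplication, and ignores tensors, GPUs, and performance entirely.

```python
class Value:
    """A scalar that records how it was computed so gradients can flow back."""

    def __init__(self, data, parents=()):
        self.data = float(data)
        self.grad = 0.0
        self._parents = parents
        self._backward = lambda: None  # leaf nodes have nothing to propagate

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data + other.data, (self, other))

        def _backward():
            # d(a + b)/da = 1 and d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad

        out._backward = _backward
        return out

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data * other.data, (self, other))

        def _backward():
            # d(a * b)/da = b and d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = _backward
        return out

    def backward(self):
        """Apply the chain rule from this node back to every input."""
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):  # outputs first, inputs last
            node._backward()


if __name__ == "__main__":
    x, w, b = Value(3.0), Value(2.0), Value(1.0)
    y = w * x + b
    loss = y * y
    loss.backward()
    print(loss.data, w.grad, x.grad, b.grad)
```

The graph is built as the expressions execute, which is the "define-by-run" style described above; a framework adds tensor operations and hardware acceleration on top of the same principle.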

3. Model Training Infrastructure: Training modern neural networks is computationally intensive.

- GPUs (Graphics Processing Units) & TPUs (Tensor Processing Units): Specialized hardware (from NVIDIA, Google, AMD) that performs the matrix multiplications central to deep learning orders of magnitude faster than standard CPUs.

- Cloud AI Platforms (e.g., Amazon SageMaker, Google Vertex AI (the successor to AI Platform), Azure Machine Learning): Provide managed, scalable environments where developers can spin up GPU-equipped instances on demand, avoiding massive capital expenditure on physical hardware.

- Distributed Training Frameworks: Tools like Horovod or framework-native features (`tf.distribute`, `torch.nn.parallel`) that split training across multiple GPUs or even multiple machines, dramatically reducing training time for large models.
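
The most common form of distributed training is data parallelism: each worker computes a gradient on its own shard of the batch, the gradients are averaged, and every worker applies the same update. The single-process sketch below simulates that with a one-parameter linear model. The function names are hypothetical, the workers run sequentially here, and real frameworks perform the averaging step with a collective operation such as all-reduce across devices.

```python
def mse_gradient(weight: float, shard: list[tuple[float, float]]) -> float:
    """Gradient of mean squared error for the model y_hat = weight * x."""
    return sum(2 * (weight * x - y) * x for x, y in shard) / len(shard)


def data_parallel_step(weight: float, data: list[tuple[float, float]],
                       num_workers: int, learning_rate: float) -> float:
    """One gradient-descent step with the batch split evenly across workers."""
    if len(data) % num_workers != 0:
        raise ValueError("this sketch assumes equally sized shards")
    shard_size = len(data) // num_workers
    shards = [data[i * shard_size:(i + 1) * shard_size] for i in range(num_workers)]

    # In a real system each gradient is computed on a different device in parallel.
    local_gradients = [mse_gradient(weight, shard) for shard in shards]

    # Stand-in for all-reduce: every worker ends up with the same averaged gradient.
    averaged = sum(local_gradients) / num_workers
    return weight - learning_rate * averaged


if __name__ == "__main__":
    data = [(float(x), 3.0 * x) for x in range(1, 9)]  # true weight is 3.0
    print(data_parallel_step(0.0, data, num_workers=1, learning_rate=0.01))
    print(data_parallel_step(0.0, data, num_workers=4, learning_rate=0.01))
```

With equally sized shards, the averaged gradient equals the full-batch gradient, which is why splitting the work changes the wall-clock time but not the update itself.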

4. Experiment Tracking and Management: AI development is highly iterative. Keeping track of what was tried, with what parameters, and its resulting performance is critical.

- MLflow, Weights & Biases (wandb), Neptune.ai: These platforms log metrics, hyperparameters, code versions, and even model artifacts for every experiment run. They allow for comparison, visualization, and collaboration, turning ad-hoc experimentation into a reproducible, scientific process.
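
At its simplest, experiment tracking means appending one structured record per run and querying those records later. The sketch below does that with a JSON Lines file. `log_run` and `best_run` are hypothetical helpers, not the API of MLflow, Weights & Biases, or Neptune.ai, which add artifact storage, dashboards, and team features on top of this idea.

```python
import json
import time
from pathlib import Path


def log_run(log_path: Path, params: dict, metrics: dict) -> None:
    """Append one experiment record (hyperparameters and results) to a JSONL file."""
    record = {"timestamp": time.time(), "params": params, "metrics": metrics}
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record) + "\n")


def best_run(log_path: Path, metric: str) -> dict:
    """Return the logged run with the highest value for the given metric."""
    with log_path.open(encoding="utf-8") as handle:
        runs = [json.loads(line) for line in handle if line.strip()]
    return max(runs, key=lambda run: run["metrics"][metric])
```

A typical use is to call `log_run` at the end of every training script and `best_run` when deciding which configuration to promote.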

5. Specialized AI Toolkits: For specific sub-domains, specialized tools have emerged.

- Natural Language Processing (NLP): Hugging Face Transformers is a transformative library providing thousands of pre-trained models (like BERT, GPT) and datasets, making state-of-the-art NLP accessible. spaCy offers industrial-strength NLP for tasks like named entity recognition and dependency parsing.

- Computer Vision (CV): OpenCV is the fundamental library for image and video processing. Frameworks like Detectron2 (Meta, originally Facebook AI Research) provide high-quality implementations for object detection and segmentation.

- Reinforcement Learning (RL): OpenAI Gym (and its successor Gymnasium) provides standardized environments for developing and comparing RL algorithms. Stable-Baselines3 offers reliable implementations of key RL algorithms.

III. The Factory Floor: Deployment & Operationalization (MLOps) Tools

A model in a Jupyter notebook is a prototype. A model serving predictions in a live application is a product. This transition is the domain of MLOps.

1. Model Packaging and Serialization: The trained model must be exported from its training environment into a stand-alone, deployable artifact.

- Formats: ONNX (Open Neural Network Exchange) is an open format for interoperability between frameworks. Framework-specific formats include TensorFlow SavedModel, PyTorch TorchScript, and Pickle (for scikit-learn models, with security caveats).

2. Model Serving: Making the model available to receive input and return predictions via an API.

- Dedicated Serving Tools: TensorFlow Serving, TorchServe, and MLflow Models are purpose-built for high-performance, low-latency serving of ML models.

- Containerization: Docker packages the model, its dependencies, and a lightweight runtime into a standardized, portable container image.

- Orchestration: Kubernetes manages the deployment, scaling, and networking of containerized model services across clusters of machines, ensuring reliability and availability.

3. Pipeline Automation: The end-to-end process—from data ingestion, to preprocessing, to training, to deployment—needs to be automated and orchestrated.

- Apache Airflow, Prefect, Kubeflow Pipelines: These workflow schedulers allow you to define, schedule, and monitor complex ML pipelines as directed acyclic graphs (DAGs), ensuring consistent, repeatable model updates and retraining.
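
The DAG idea can be shown without any orchestration framework: declare which task depends on which, then execute tasks in an order that respects those dependencies. The sketch below uses the standard-library `graphlib` module (Python 3.9+). The task names and the `run_pipeline` helper are illustrative assumptions and do not reflect the Airflow, Prefect, or Kubeflow APIs.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Each task maps to the set of tasks that must finish before it can start.
PIPELINE = {
    "ingest": set(),
    "preprocess": {"ingest"},
    "train": {"preprocess"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}


def run_pipeline(dependencies: dict[str, set[str]],
                 tasks: dict[str, Callable[[], None]]) -> list[str]:
    """Run every task once, in dependency order, and return the execution order."""
    executed = []
    for name in TopologicalSorter(dependencies).static_order():
        tasks[name]()
        executed.append(name)
    return executed


if __name__ == "__main__":
    # Placeholder task bodies; real tasks would load data, fit a model, and so on.
    placeholder_tasks = {name: (lambda n=name: print(f"running {n}")) for name in PIPELINE}
    run_pipeline(PIPELINE, placeholder_tasks)
```

A production scheduler adds what this sketch leaves out: retries, scheduling, parallel execution of independent branches, and monitoring.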

4. Monitoring and Observability: A deployed model is a living entity. Its performance can “drift” as the real-world data it encounters changes.

- Model Monitoring Platforms (e.g., WhyLabs, Fiddler, Arize AI): Track key metrics like prediction latency, throughput, and, crucially, data drift (changes in input data distribution) and concept drift (changes in the relationship between input and target). They provide alerts when model performance degrades in production.
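
Data drift detection often comes down to comparing the distribution of a feature at training time with its distribution in production. One simple measure is the two-sample Kolmogorov-Smirnov statistic, the largest gap between the two empirical cumulative distributions. The sketch below is a minimal version under that assumption; the `0.3` alert threshold is an arbitrary illustrative value, and commercial monitoring platforms use their own, typically richer, drift metrics.

```python
from bisect import bisect_right


def ks_statistic(reference: list[float], live: list[float]) -> float:
    """Largest absolute gap between the two empirical CDFs (0 = identical, 1 = disjoint)."""
    ref, cur = sorted(reference), sorted(live)
    return max(
        abs(bisect_right(ref, x) / len(ref) - bisect_right(cur, x) / len(cur))
        for x in ref + cur
    )


def has_drifted(reference: list[float], live: list[float],
                threshold: float = 0.3) -> bool:
    """Flag drift when the KS statistic exceeds a chosen (hypothetical) threshold."""
    return ks_statistic(reference, live) > threshold


if __name__ == "__main__":
    training_values = [1.0, 2.0, 3.0, 4.0]
    production_values = [11.0, 12.0, 13.0, 14.0]
    print(ks_statistic(training_values, production_values))
    print(has_drifted(training_values, production_values))
```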

IV. The Supporting Scaffold: Collaboration & Ancillary Tools

AI is a team sport, built within organizational and ethical contexts.

1. Code Development & Collaboration:

- Integrated Development Environments (IDEs): Jupyter Notebooks/Lab are indispensable for exploratory data analysis and prototyping. PyCharm and VS Code (with Python/ML extensions) are powerful for larger codebase development.

- Version Control: Git (with platforms like GitHub, GitLab, Bitbucket) is non-negotiable for tracking code changes, collaborating, and implementing CI/CD (Continuous Integration/Continuous Deployment).

2. Ethical AI & Explainability Tools: As AI impacts society, tools to ensure fairness, transparency, and accountability are moving from optional to essential.

- Explainable AI (XAI) Libraries: SHAP, LIME, and Captum help explain individual predictions by attributing importance to input features. Fairlearn and AI Fairness 360 provide metrics and algorithms to detect and mitigate bias in datasets and models.
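
As an example of the kind of metric fairness toolkits report, the sketch below computes the demographic parity difference: the gap between the highest and lowest rate of positive predictions across groups. It is a hand-written illustration with hypothetical function names and toy data, not the Fairlearn or AI Fairness 360 implementation, and a single metric is never sufficient evidence that a model is fair.

```python
from collections import defaultdict


def selection_rates(predictions: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for prediction, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += prediction
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(predictions: list[int], groups: list[str]) -> float:
    """Gap between the most- and least-favored groups (0 means equal rates)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Toy binary predictions for two hypothetical groups of four people each.
    predictions = [1, 1, 1, 0, 1, 0, 0, 0]
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    print(selection_rates(predictions, groups))
    print(demographic_parity_difference(predictions, groups))
```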

3. Computing Environment Management:

- Package & Environment Managers: Conda and pip (with `virtualenv` or `venv`) manage Python packages and their versions, preventing dependency conflicts, a notorious problem in ML projects.

Conclusion: The Synergy of Tools and Mind

The modern AI toolkit is vast and stratified, reflecting the maturity of the field. It is no longer sufficient to be a brilliant algorithmist; one must also be a competent data engineer, a proficient software developer, and a thoughtful systems architect. The true “tool” required for artificial intelligence is, ultimately, a methodology—a disciplined approach that understands the lifecycle from data to deployment and knows which tool to apply at which stage.

The most elegant model will fail if built on rotten data. The most accurate model is worthless if it cannot be integrated reliably into a business process. Therefore, the contemporary AI practitioner’s expertise lies not in mastering every single tool, but in understanding the landscape: knowing that a data drift problem is surfaced by a monitoring tool like WhyLabs, not by tweaking hyperparameters; knowing that a collaboration bottleneck around untracked experiments is eased by adopting a tracker like MLflow, not by sending more emails.

These tools democratize access to powerful techniques, but they do not automate insight. They are the chisels, lathes, and forges of the digital age. The cathedral of artificial intelligence is built by the architects who wield them with purpose, ethics, and a clear vision of the world they intend to create. The tools are necessary, but the human mind—curious, critical, and responsible—remains the ultimate catalyst for intelligence, both artificial and organic.